Ambitions

In this research project, scientific and technical innovations in network anomaly detection are developed for a new generation of early warning systems. Three challenges are addressed:

- Reliability and a high detection rate of the anomaly detection,

- Privacy, and

- Real-time analysis of large data sets

Different approaches to information collection are combined to reach this goal. The work is divided as follows:

First, a suitable way of collecting information about the network traffic is developed. It should satisfy three properties:

- Richly detailed description of network traffic at all layers of the communication stack

- Resource-efficient collection and storage of useful meta-information from the communication data

- Compliance with data protection requirements

Several approaches to information collection are designed specifically for high-bandwidth networks. Examples are sFlow and NetFlow. sFlow samples packets on the network link according to fixed sampling strategies and is therefore a statistical approach. Normally, not the complete packet is sampled, but only its first n bytes. NetFlow, in contrast, describes the individual connections in the network. Each connection is described by certain characteristics, which, however, include no application-level information. This makes tasks such as intrusion detection problematic. In addition, there are counter-based approaches, which count the occurrence of certain properties in packets (e.g. the number of IPv4 packets). These approaches work on a time-window basis. They are also suited to processing large amounts of data and storing it for long periods. Their drawback, however, is a loss of detail, because the different communication streams are superimposed within each window. An example of such a detection system is the Internet Analysis System.
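The sampling idea described above can be illustrated with a minimal sketch. It is not the sFlow protocol or any real library API: the function name `sample_packets` and its parameters are assumptions made for illustration, and the deterministic 1-in-N choice stands in for whatever sampling strategy a real system uses.

```python
from typing import Iterable, List


def sample_packets(packets: Iterable[bytes], every_nth: int, first_n_bytes: int) -> List[bytes]:
    """Keep every `every_nth` packet and truncate it to its first `first_n_bytes` bytes."""
    if every_nth < 1 or first_n_bytes < 0:
        raise ValueError("every_nth must be >= 1 and first_n_bytes must be >= 0")
    sampled = []
    for index, packet in enumerate(packets):
        # Deterministic 1-in-N sampling (an assumption); real samplers often randomise this.
        if index % every_nth == 0:
            # Only the head of the packet is kept, not the complete packet.
            sampled.append(packet[:first_n_bytes])
    return sampled


# Six dummy packets of 10 bytes each; keep every third one, truncated to 4 bytes.
packets = [bytes([i]) * 10 for i in range(6)]
samples = sample_packets(packets, every_nth=3, first_n_bytes=4)
```

Because only a fraction of the packets and only their first bytes are retained, the result is a statistical view of the traffic rather than a complete one.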

The scientific and technical concept is fundamentally counter-based. However, rather than collecting data only within a time window, the approach is extended so that data is additionally collected on a per-flow basis. That is, the data is not only aggregated per time window but also collected separately for every single communication stream. Furthermore, information that is useful for detecting attacks is collected not only up to OSI layer 4 (TCP/UDP) but up to the application layer. This approach is intended both to ensure efficient collection of information and to provide a detailed yet privacy-compliant description of the network traffic. Thus, a counter-based approach is combined with a flow-based approach, with the aim of achieving a considerably higher detection rate than conventional detection systems.
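A minimal sketch of how counter-based and flow-based collection might be combined is given below. It is an illustration under stated assumptions, not the project's implementation: the names `PacketMeta`, `CombinedCollector` and `FlowKey`, the feature labels, and the 5-tuple used as flow key are all hypothetical.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass
from typing import Dict, Tuple

# Hypothetical flow key: source IP, source port, destination IP, destination port, protocol.
FlowKey = Tuple[str, int, str, int, str]


@dataclass(frozen=True)
class PacketMeta:
    timestamp: float            # seconds since start of capture
    flow: FlowKey
    features: Tuple[str, ...]   # properties observed in the packet, e.g. ("ipv4", "tcp", "http_get")


class CombinedCollector:
    """Counts packet properties per time window and, additionally, per flow."""

    def __init__(self, window_seconds: float) -> None:
        self.window_seconds = window_seconds
        self.window_counters: Dict[int, Counter] = defaultdict(Counter)
        self.flow_counters: Dict[FlowKey, Counter] = defaultdict(Counter)

    def observe(self, packet: PacketMeta) -> None:
        window = int(packet.timestamp // self.window_seconds)
        for feature in packet.features:
            # Counter-based view: all streams are superimposed within one window.
            self.window_counters[window][feature] += 1
            # Flow-based view: the same counter is kept separately per stream.
            self.flow_counters[packet.flow][feature] += 1


collector = CombinedCollector(window_seconds=60.0)
flow_a = ("10.0.0.1", 40000, "10.0.0.2", 80, "tcp")
flow_b = ("10.0.0.3", 40001, "10.0.0.2", 6667, "tcp")
collector.observe(PacketMeta(1.0, flow_a, ("ipv4", "tcp", "http_get")))
collector.observe(PacketMeta(2.0, flow_b, ("ipv4", "tcp", "irc_join")))
collector.observe(PacketMeta(61.0, flow_a, ("ipv4", "tcp")))
```

In this sketch only counters are stored, never payload, and the application-layer labels such as `http_get` stand in for whatever features a real collector would extract above layer 4.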

Once a suitable information collection system has been implemented, the next step is to develop a methodology for evaluation and classification. The focus here is on reducing the number of messages.

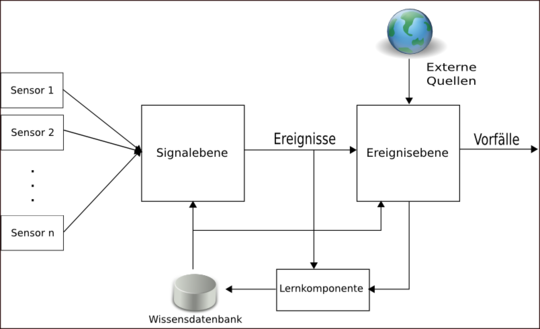

In the first processing step, the flow data is grouped according to various criteria (e.g. by application-layer protocol) in order to reduce the amount of data significantly through this information fusion. These groups are to be reviewed manually by an analyst and automatically by a program, and enriched with additional information. The result is a description of the desired normal behavior in a description language. For example, it allows an administrator to decide that all IRC flows in the corporate network are generally considered abnormal and are therefore registered as events. All groups that were not classified as abnormal are then examined for anomalies with the help of artificial intelligence methods (including probabilistic neural networks and similar methods). In live operation, all anomalous flows are subsequently reported to the administrator for further analysis. Figure 1 shows the components of an anomaly detection system.
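The grouping step and the IRC example can be sketched as follows. This is a simplified illustration: a plain set of protocol names stands in for the description language mentioned above, and the names `Flow`, `group_by_protocol` and `split_by_policy` are hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class Flow:
    flow_id: int
    app_protocol: str   # application-layer protocol, e.g. "http", "dns", "irc"
    byte_count: int


def group_by_protocol(flows: List[Flow]) -> Dict[str, List[Flow]]:
    """Fuse individual flows into groups keyed by application-layer protocol."""
    groups: Dict[str, List[Flow]] = defaultdict(list)
    for flow in flows:
        groups[flow.app_protocol].append(flow)
    return dict(groups)


def split_by_policy(
    groups: Dict[str, List[Flow]], abnormal_protocols: Set[str]
) -> Tuple[List[Flow], Dict[str, List[Flow]]]:
    """Register flows of protocols declared abnormal as events; return the rest for further analysis."""
    events: List[Flow] = []
    remaining: Dict[str, List[Flow]] = {}
    for protocol, members in groups.items():
        if protocol in abnormal_protocols:
            events.extend(members)
        else:
            remaining[protocol] = members
    return events, remaining


flows = [Flow(1, "http", 1200), Flow(2, "irc", 300), Flow(3, "http", 800)]
groups = group_by_protocol(flows)
# Administrator policy (assumed for this example): IRC is abnormal in this network.
events, remaining = split_by_policy(groups, abnormal_protocols={"irc"})
```

Here `events` holds the IRC flow, while the `remaining` groups would be passed on to the learning-based anomaly examination.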

Figure 1: Components of an anomaly detection system

An important component of the new anomaly detection system (or early warning system) will be a feedback module, which allows the analyst to review the decisions of the anomaly detection system and thus to improve future decisions. This feedback is necessary because anomaly detection procedures generate many messages that are of no concern in a specific application environment. The challenge at this point is to let only those anomalies through to the administrator that are very important for the operation and the IT security of a network. In this way, both network management and network security aspects are covered. What is relevant in a given environment can, in most cases, be decided only by the responsible administrator. This expertise must be fed gradually into the anomaly detection system so that it can be used by the artificial intelligence. In this context, an administrator has two tasks. First, the administrator has to identify clusters that are generally considered abnormal. Additional information describing why a cluster is considered abnormal can be stored with the clusters. Second, the administrator must assess the risk level of these anomalies and likewise enrich them with additional information. As part of this additional information, the administrator can assign anomalies to an incident. Incidents can be, for example, failures of information systems or attacks on the infrastructure. If an anomaly appears again, the administrator should immediately get an overview of what its significance might be.
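One way to picture the additional information an administrator attaches to a cluster is the small data structure below. It is only a sketch: the field names, the 1-to-5 risk scale, the cluster identifiers and the example incident text are assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import Dict, Optional


@dataclass(frozen=True)
class Annotation:
    reason: str                      # why the cluster is considered abnormal
    risk_level: int                  # hypothetical scale: 1 (low) to 5 (critical)
    incident: Optional[str] = None   # e.g. a system failure or an attack


class FeedbackStore:
    """Keeps administrator annotations so they can be shown when an anomaly recurs."""

    def __init__(self) -> None:
        self._by_cluster: Dict[str, Annotation] = {}

    def annotate(self, cluster_id: str, annotation: Annotation) -> None:
        self._by_cluster[cluster_id] = annotation

    def overview(self, cluster_id: str) -> Optional[Annotation]:
        # Returns None for clusters the administrator has not assessed yet.
        return self._by_cluster.get(cluster_id)


store = FeedbackStore()
store.annotate(
    "irc-outbound",
    Annotation(reason="IRC is not used in this network", risk_level=4,
               incident="suspected attack on the infrastructure"),
)
known = store.overview("irc-outbound")
unknown = store.overview("dns-burst")
```

When the same cluster shows up again, `overview` gives the administrator the earlier assessment immediately, while unknown clusters return nothing and still require a first review.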

So that only major anomalies reach the administrator, an intelligent filter uses the administrator's feedback to filter out all flows or anomalies that are similar to those rated as unimportant. The filter also uses artificial intelligence methods to perform this classification. Anomalies filtered out in this way are incorporated into a statistic, which is reviewed periodically in order to find out whether significant anomalies were mistakenly removed.
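The filter described above can be sketched with a simple similarity measure. The text does not specify which method is used, so the Gaussian kernel, the threshold, the feature vectors and the names `FeedbackFilter`, `rate_unimportant` and `should_report` are all assumptions chosen for illustration.

```python
import math
from collections import Counter
from typing import List, Optional, Sequence, Tuple

Vector = Sequence[float]


def gaussian_similarity(a: Vector, b: Vector, sigma: float) -> float:
    """Similarity in (0, 1]; 1.0 means the two feature vectors are identical."""
    squared_distance = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-squared_distance / (2.0 * sigma ** 2))


class FeedbackFilter:
    """Suppresses anomalies that resemble ones the administrator rated as unimportant."""

    def __init__(self, sigma: float = 1.0, threshold: float = 0.5) -> None:
        self.sigma = sigma
        self.threshold = threshold
        self.unimportant: List[Tuple[Vector, str]] = []
        self.suppressed: Counter = Counter()   # statistic for periodic review

    def rate_unimportant(self, features: Vector, reason: str) -> None:
        self.unimportant.append((tuple(features), reason))

    def should_report(self, features: Vector) -> bool:
        best_score: float = 0.0
        best_reason: Optional[str] = None
        for example, reason in self.unimportant:
            score = gaussian_similarity(features, example, self.sigma)
            if score > best_score:
                best_score, best_reason = score, reason
        if best_reason is not None and best_score >= self.threshold:
            # Filtered out, but counted so that wrong removals can be found later.
            self.suppressed[best_reason] += 1
            return False
        return True


feedback_filter = FeedbackFilter(sigma=1.0, threshold=0.5)
feedback_filter.rate_unimportant([1.0, 0.0], "nightly backup")
near = feedback_filter.should_report([1.1, 0.0])   # very close to the rated example
far = feedback_filter.should_report([5.0, 5.0])    # unlike anything rated so far
```

The `suppressed` counter plays the role of the statistic mentioned above: reviewing it periodically shows how many anomalies were removed for each reason.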

The final aspect is the efficient implementation of the method, so that it can be used even under heavy network traffic. These investigations cover the use of GPUs and FPGAs to implement the anomaly detection method (data collection and analysis). In particular, the following questions are to be answered:

- Can these technologies be utilized for the mentioned purpose?

- Which technology is the most appropriate for the individual processing levels?

- Where are the limits?